ChatGPT and the role of AI in wire fraud

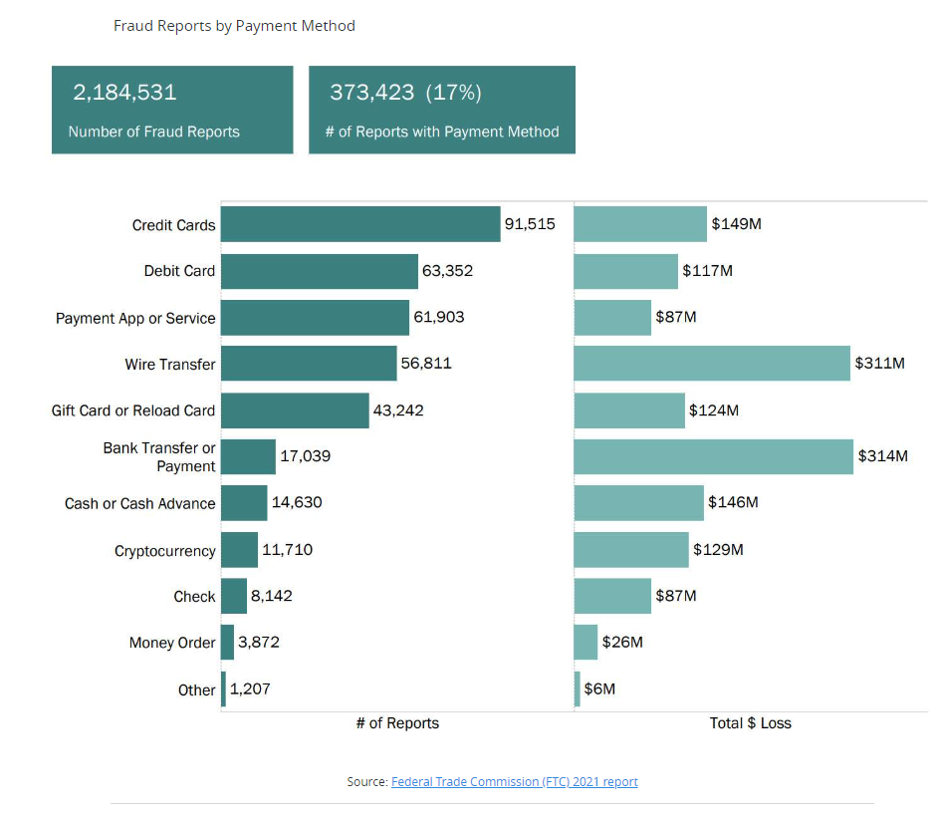

The FTC’s 2021 report recorded over 2 million fraud reports, 17% of which included a reported payment. The actual number is higher, as not all cases are reported. The majority of these cases involved credit or debit cards (90,000 and 60,000 reports, respectively), for a combined reported loss of $276 million. However, wire and bank transfers accounted for a combined reported loss of $625 million across 56,000 and 17,000 cases. This breaks down to about $1,800 per card fraud versus $8,900 per wire or bank transfer fraud, roughly five times the loss per incident.
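To make the per-incident comparison concrete, here is a minimal sketch of the arithmetic, using only the rounded figures quoted above. The variable and function names are my own. Because the inputs are rounded, the averages it produces differ slightly from the per-fraud figures in the paragraph, which were presumably derived from unrounded data.

```python
# Rounded figures as quoted in the paragraph above (FTC 2021 report).
card_reports = 90_000 + 60_000       # credit and debit card reports combined
card_loss = 276_000_000              # combined reported card loss, USD
transfer_reports = 56_000 + 17_000   # wire and bank transfer reports combined
transfer_loss = 625_000_000          # combined reported transfer loss, USD


def average_loss(total_loss: float, reports: int) -> float:
    """Average reported loss per report, in USD."""
    return total_loss / reports


card_avg = average_loss(card_loss, card_reports)
transfer_avg = average_loss(transfer_loss, transfer_reports)
ratio = transfer_avg / card_avg

print(f"card: ${card_avg:,.0f}  transfer: ${transfer_avg:,.0f}  ratio: {ratio:.1f}x")
```

Either way, the takeaway is the same: a single successful wire or bank transfer fraud costs several times what a typical card fraud does.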

I was talking to an accounting firm that almost lost $80,000 to wire transfer fraud. They work with a manufacturer and send wires on the manufacturer’s behalf to suppliers in China. The manufacturer had worked on a deal for several months and had been in regular contact with the accountant. During this time, the manufacturer’s email was compromised, and the attackers spent months observing the mailbox. While watching, they learned the company’s patterns and who the key players were.

With all this knowledge, they waited until late on a Friday to email the accountant, asking for money to be wired immediately to close the deal the manufacturer had been working on for several months. A few minutes later, just as the head of manufacturing normally would, they sent a second authorization, because the company and the accountant did have multiple approval layers in place for sending a wire. Seeing nothing out of the ordinary, the accountant went ahead and wired the money. Luckily, the CEO was working late that Friday, noticed a significant change in the corporate balance, and called the accountant. They notified the bank and the FBI and successfully froze the funds. It took a while for the funds to be returned to the account, but they were recovered.

Will ChatGPT and other AI increase these numbers?

Yes, but let’s dive into this more.

By now, I’m sure that you’ve heard all about ChatGPT by OpenAI. I won’t go into much detail on it, except to say that it is an AI platform that mimics normal human conversation. With it, we will attempt to reproduce the email portion of the fraud in under five minutes. Using ChatGPT, we will take on the role of a threat actor developing a phishing email to get a wire transfer sent, one free of grammar mistakes and misspellings.

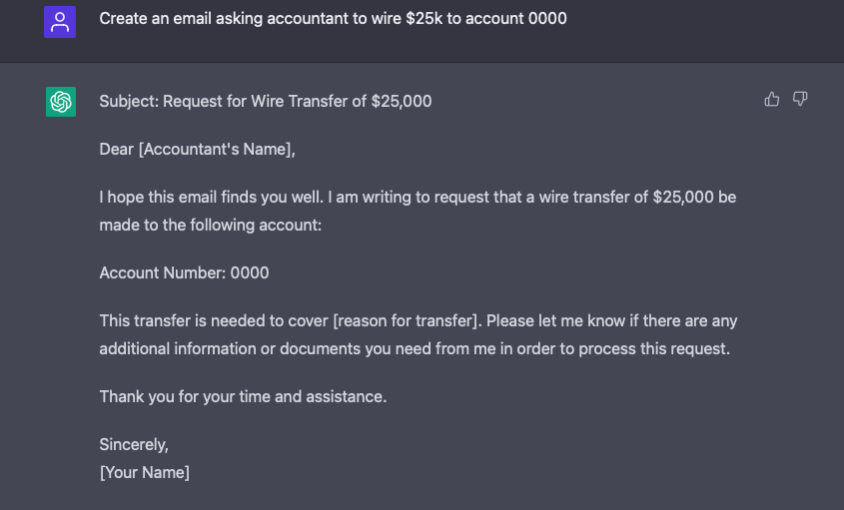

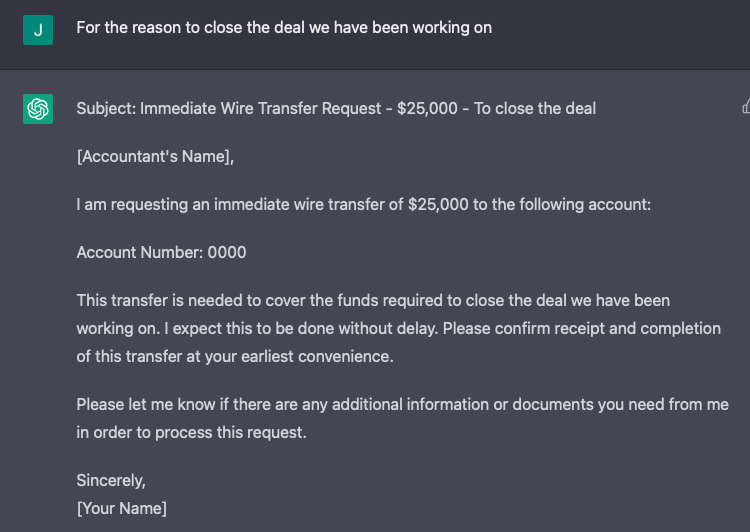

First, we have to ask ChatGPT to “Create an email asking an accountant to wire $25k to account 0000”.

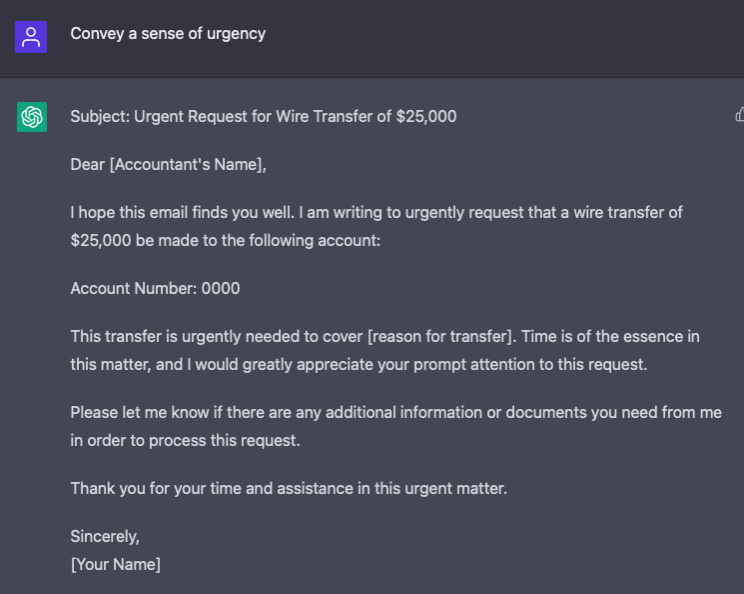

This isn’t bad for the quick, poorly worded request that we made. While this might suffice for some threat actors, ours were happy to spend weeks watching communications go back and forth. With that knowledge, let’s tune our message. Without restarting the original request, we ask that the initial response be updated to “Convey a sense of urgency.”

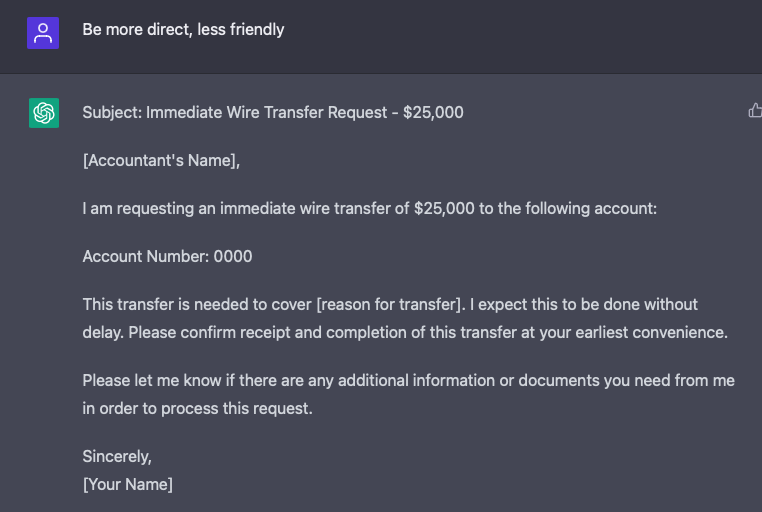

ChatGPT remembers the previous question and the answer it provided, and it adds the urgency to both the body and the recommended subject line. However, while it did what we asked, the message seems too friendly for my taste, so let’s make it a little more direct.

Now the message conveys urgency in the tone I would expect from a CEO making an urgent request on a Friday. One final tweak adds a reason for the request, which helps justify the urgency.

Now we have a good phishing message for requesting a wire. There are no grammar or spelling errors that I can identify, and if I, as the accountant, received this knowing that a deal was about to close, I wouldn’t think twice about sending the funds.

So what can be done?

The best way to combat fraud is to have strong policies that don’t rely on a single point of failure, such as a compromised email account. While some good policies were in place here, including multiple authorizers, they all relied on a single medium: email. The best option would be to update the policy so that the second check uses a different method of communication, preferably a phone call, although a text message would also improve security. The key is to make sure that the number being called is a known good number, and that the communication is outbound rather than inbound. Finally, while smaller organizations can rely on having the secondary authorizer call in, because staff might recognize the caller’s voice, the procedure should be repeatable so that anyone with the proper authority can follow it.

Along with this, there needs to be a process for updating the points of contact. For example, don’t accept a new phone number just because an email supplies one. Instead, apply the same verification policy to updating the number as you would to authorizing a transfer, so that the update process does not undermine the controls.
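The policy described above can be written down as a simple rule, which makes it easier to apply consistently. The following is a minimal sketch of that rule, not a production control: the `Confirmation` class, its field names, and `is_confirmed` are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Confirmation:
    channel: str          # e.g. "email", "phone", "sms"
    outbound: bool        # True if we placed the call or sent the text ourselves
    number_on_file: bool  # True if we used the known good number already on record


def is_confirmed(request_channel: str, confirmation: Confirmation) -> bool:
    """Return True only for an outbound, out-of-band check to a known good number."""
    out_of_band = confirmation.channel != request_channel
    return out_of_band and confirmation.outbound and confirmation.number_on_file


# The Friday-night scenario: request and "second approval" both arrive by email.
email_followup = Confirmation(channel="email", outbound=False, number_on_file=False)
# The revised policy: the accountant calls the number already on record.
callback = Confirmation(channel="phone", outbound=True, number_on_file=True)
# An inbound call does not count, even if it appears to come from the right number.
inbound_call = Confirmation(channel="phone", outbound=False, number_on_file=True)

print(is_confirmed("email", email_followup))  # False
print(is_confirmed("email", callback))        # True
print(is_confirmed("email", inbound_call))    # False
```

The same check can gate a request to change a contact’s phone number: the change is accepted only after an outbound confirmation to the number that was already on file.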

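Build relationships with the parties involved: bankers, accountants, owners, and the FBI or local law enforcement. As the story above shows, being able to reach the bank and the FBI quickly is what allowed the funds to be frozen.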

While this might not be seen as a typical cybersecurity issue, it is something that we work to identify during our risk assessments. We focus not only on IT but also on the business processes that can be impacted. Reach out to us today to schedule a free risk review session and learn how we can work together to secure your clients’ data.